The following is a transcript of my talk last week at our Mind-at-Large Project’s inaugural conference, “A New Dawn.” Video of all the talks, including this one, will be available in a few weeks on the Mind-at-Large website.

Buenos días, everyone. I’m here at the Casa Oasis Guest House in Mérida, on the ancestral lands of the Yucatec Maya, whose descendants continue to live here on the Yucatán Peninsula. I want to acknowledge that the land beneath me is not some abstract space where human beings can simply plug and play, but a living inheritance shaped by generations of relationships among human beings, and also among animals, forests, waters, and the more-than-human world. In a spirit of respect, gratitude, and reciprocity, I want to honor the enduring presence and sovereignty of the Maya people, past and present.

I want to begin by saying a bit about the aims of this project.

Obviously, as we’ve seen over the last few days, there are widening cracks in the edifice of reductive physicalism. However, most academic researchers continue to treat mind as a mere byproduct of skull-enclosed electrochemistry. From our point of view here on the Mind-at-Large team, and I assume for many of you joining us, those electrons and neurochemicals are not just dead stuff, nor are they skull-bound. They are ecologically extended, experiential processes in their own right.

So this idea that nature is devoid of mind until brains somehow secrete it, as though that same dead stuff could be transformed into conscious life simply by rearranging it, is not a scientific fact. It is not even, so far as I am concerned, a coherent scientific theory. It is a metaphysical assertion that denies that it is a metaphysics. It is a materialist dogma, and a rather irrational one at that.

The Mind-at-Large Project is our attempt to tear down the wall that physicalism has erected to protect itself from alternative views, views which, as the talks we’ve heard over the last few days have demonstrated, may turn out to be more scientifically justified and more philosophically illuminating than the attachment to reductionistic methods has heretofore allowed us to consider.

If some modicum of mind pervades nature at every scale, from light to life to logic, that is, from photons to cells to human persons, if consciousness is not just the private property of disembodied Cartesian substances but a relational process or an experiential field within which we are embedded, then we are dealing not with a normal scientific problem anymore, not with some narrow technical puzzle, but with a new civilizational charter, really, a broadening of our cultural and even our spiritual horizons.

There have already been plenty of powerful critiques of physicalism, and we welcome more. But we also want to look forward. Our guiding question, as I would frame it, is this: what new theoretical and ethical possibilities open up for us if consciousness is not treated as an illusion to be explained away, but as the inner essence of the cosmos as a whole?

So that is what we are up to with the Mind-at-Large Project. This is just the first year. We are only just inaugurating it, and we are very glad you are along for the journey.

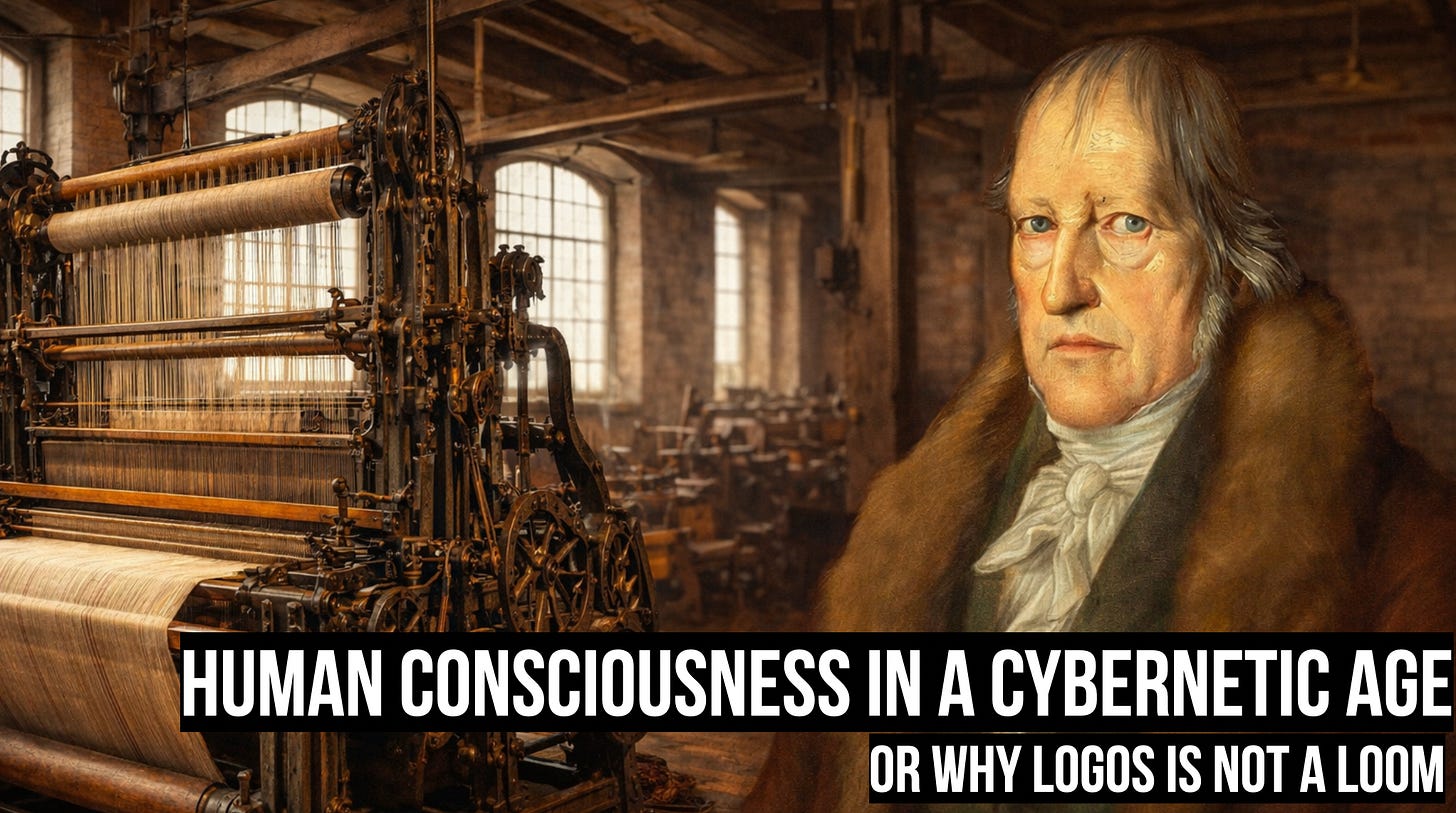

Let me now transition to my own talk. The title of my talk is “Human Consciousness in a Cybernetic Age, or Why Logos Is Not a Loom.” Hopefully you recognize this man. This is Georg Wilhelm Friedrich Hegel. I’ll speak about him in a moment.

I want to explore what happens to our understanding of consciousness when the dominant metaphor for mind becomes the computer, when cognition is reduced to computation. I want to argue that the consequences of taking this metaphor too literally, the consequences for our understanding of ourselves and the world, are dire.

I think the claim that cognition is computation is a suggestive metaphor, but I want to challenge it. Metaphors are not just the shiny paint job on the vehicle of cognition. They are the engine of thought. They set the limits of conceivability. They shape what we can think and what we cannot think.

I mentioned Hegel because I will be drawing on his early critique of the mind-machine analogy. He was writing in the first part of the nineteenth century. He was a German Idealist, and I would say he remains relevant today in our cybernetic age not despite the fact that he comes from a pre-digital era, but precisely because he comes from a time before we had been all but fully captured by the analogy between mind and machine.

One of the roles of philosophy, as I see it, especially in a technoscientific age, is to help us notice analogies as analogies, thereby allowing us to avoid the kind of misplaced concreteness that might otherwise hijack our thinking.

In our cybernetic age, this metaphor, that cognition is computation, has calcified into something approaching common sense. It is repeated in popular science writing, in neuroscience journals, in AI advertising campaigns, in venture capital rhetoric, in policy documents, and in research funding proposals. We are told that brains are information processors, that memory is a kind of physical storage, that perception is input and action is output. We are told that intelligence is an algorithm for free-energy minimization, that language mastery is a matter of statistical prediction, and that with enough training data and compute, consciousness itself will eventually be artificially engineered.

There are enormous economic and institutional forces reinforcing this picture. Massive tech advertising budgets are now devoted to normalizing the idea that language models like ChatGPT and Claude engage in a form of thought. There are decades of sunk cost in computational neuroscience and AI research that have created powerful incentives encouraging us to imagine that this suggestive analogy is really an established scientific ontology.

The danger here is not merely theoretical. It is not just that we are getting consciousness wrong. It is also existential, because we risk losing sight of the fact that, while machines are being anthropomorphized, we are also increasingly being mechanized.

To be clear, I am not here to rehearse Luddite arguments that we should all destroy our machines. We are here communicating with each other and sharing these ideas because of technology. My argument is not anti-tech. My argument is that we must resist the equation of cognition with computation.

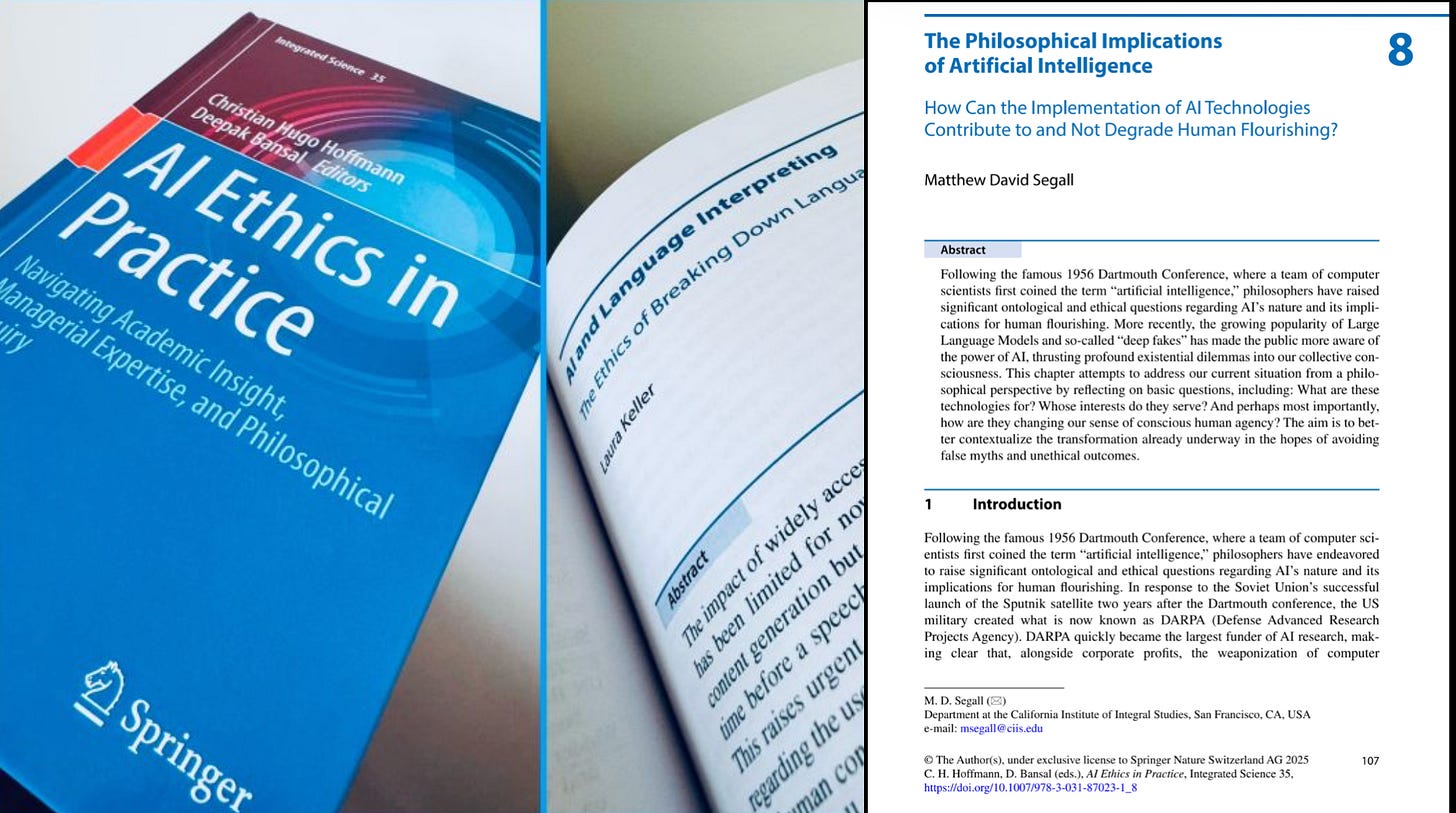

This is not because human intelligence is somehow pure and technology can only pollute it. Quite the contrary. As I argue in a chapter in the book AI Ethics and Practice, titled “The Philosophical Implications of Artificial Intelligence,” human intelligence has always already been artificial in the sense that it has always been extended, augmented, and amplified by our tools, by media technologies, including language itself.

Speech is already an artifact. It is an externalization of thought. The alphabet, print, radio, the internet, all of these are technical prostheses of thought. Human intelligence is already, in some sense, artificial, in that it depends upon the tools our species has been co-evolving with for millions of years.

Our mouths are only nimble enough to speak because stone tools and fire allowed us to cut and cook our food, which let our jaw muscles shrink and our brains grow into the extra space in our skulls. Our symbolic culture, as Terrence Deacon argues in his great book The Symbolic Species, has changed our brains. So our intelligence, in that sense, is already artificial. We were cyborgs long before the first microchip was manufactured.

So again, my argument is not that we should reject technology. I am seeking, rather, to caution against the acceptance of false analogies. I will repeat that a few times just to make it clear. There are good use cases for large language models. But the creation of convincing machine mimicries of mind, simulations of autonomous computational intelligence, is not the same thing as creating actual independent machine minds with an inner life of their own. It is a mere machination, a ruse, in other words.

LLMs do not cognize. They do not think, understand, or know anything. They simulate the products of cognition by reweaving the linguistic traces that human acts of cognition have left behind. To borrow and revise a metaphor from Hegel, LLMs are like mechanical looms. They are not incarnations of Logos. They are looms.

I do not have time to give a full introduction to Hegel. He is a thinker who comes in the aftermath of Immanuel Kant’s revolution, the transcendental method of philosophy that Kant inaugurated with the Critique of Pure Reason in 1781. Kant wanted to put metaphysics on a scientific basis by understanding what the mind provides a priori, shaping our experience of the world before we have experienced anything at all. In other words, Kant wanted to examine the instrument of our knowing in order to understand how it operates and thereby justify our scientific knowledge of the world.

Of course, Kant left us with a kind of epistemological dualism between our experience of phenomena, including space and time and all the objects within them, and the realm of things in themselves, which Kant says lies beyond our ability to know, other than that it exists.

Hegel was not satisfied with that sort of dualism. It is no longer a substance dualism in the Cartesian sense, but it remains an epistemological dualism. Hegel’s point is that, in trying to make metaphysics more scientific, Kant was attempting to begin philosophizing without presuppositions. However, Hegel points out that Kant had already begun with the presupposition that mind is separated from the world, that thought and being exist in airtight compartments apart from one another.

So Hegel, in his Logic, tries to develop an even more presuppositionless mode of a priori philosophizing. He begins with pure being and shows how the very category of being, when we try to think it in its immediacy, is impossible to grasp. It disappears. It becomes non-being. And then non-being itself begins to flicker, and we recognize that being and non-being generate a kind of becoming. Hegel derives a whole series of categories out of this immanent dialectical process of unfolding.

It is in the context of his Logic that he develops a metaphor, or an analogy, for a certain kind of thinking, mechanical thinking, as something like a loom.

Hegel does follow Kant in distinguishing two types of thinking. One he calls Reason, Vernunft in German, and the other he calls Understanding, Verstand in German. Reason is organic. It generates ideas dialectically. It is the capacity to generate categories, as Hegel does in his Logic. Understanding, by contrast, operates with categories already formed and ready-made, as if it found them rather than generating them itself.

So Understanding is mechanical. It rearranges ready-made concepts, whereas Reason is living. It is a self-developing activity rather than a mechanism for externally rearranging fixed concepts. Reason is not a calculator manipulating pregiven data according to formal rules. Reason is self-moving. It differentiates itself, encounters its own limits, negates its one-sidedness, and returns to itself transformed.

The easy division between form and content, syntax and semantics, hardware and software, still present in Kant and in anyone who imagines thinking as a mere mechanism (say, a functionalist who separates the form of thought from its substrate), is overcome in Hegel’s account of human thinking activity. He wants to begin before any such division.

Reason, for Hegel, does not merely produce ordered outputs. It undergoes the transformations it thinks, or rather, we as rational animals do. What does that mean? Here I can draw on Alfred North Whitehead, who puts it nicely: “No thinker thinks twice; and, to put the matter more generally, no subject experiences twice.” This comes in the early pages of Process and Reality.

That is unlike large language models, whose numerical weights, after the initial training runs and reinforcement tuning, are frozen and locked in place; otherwise they would risk random drift, catastrophic forgetting, and other such problems. LLMs are not continuing to learn when we interact with them.

In Hegel’s Science of Logic, specifically in the second major part, the Doctrine of Essence, Hegel articulates a critique of the Understanding’s external, mechanistic mode of reflection by way of an analogy to a loom, which was, of course, a prominent piece of technology in the Industrial Revolution. For Hegel, this mode of thought takes concepts (he gives the examples of identity and difference) as though they were already-spun thread, raw material already given, and then weaves them together.

From Hegel’s point of view, to imagine cognition as nothing more than a loom weaving the warp of identity and the woof of difference is to reduce thinking to a mechanism that works on already finished materials. The thinker and the object thought remain fundamentally unchanged. However intricate the pattern formed by the threads, and however astonishingly beautiful the loom’s products may be, the loom stands at a remove from what it weaves. The producer and the product are separate. The loom combines ready-made materials without itself being inwardly transformed.

I think Hegel’s loom is the perfect analogy for large language models.

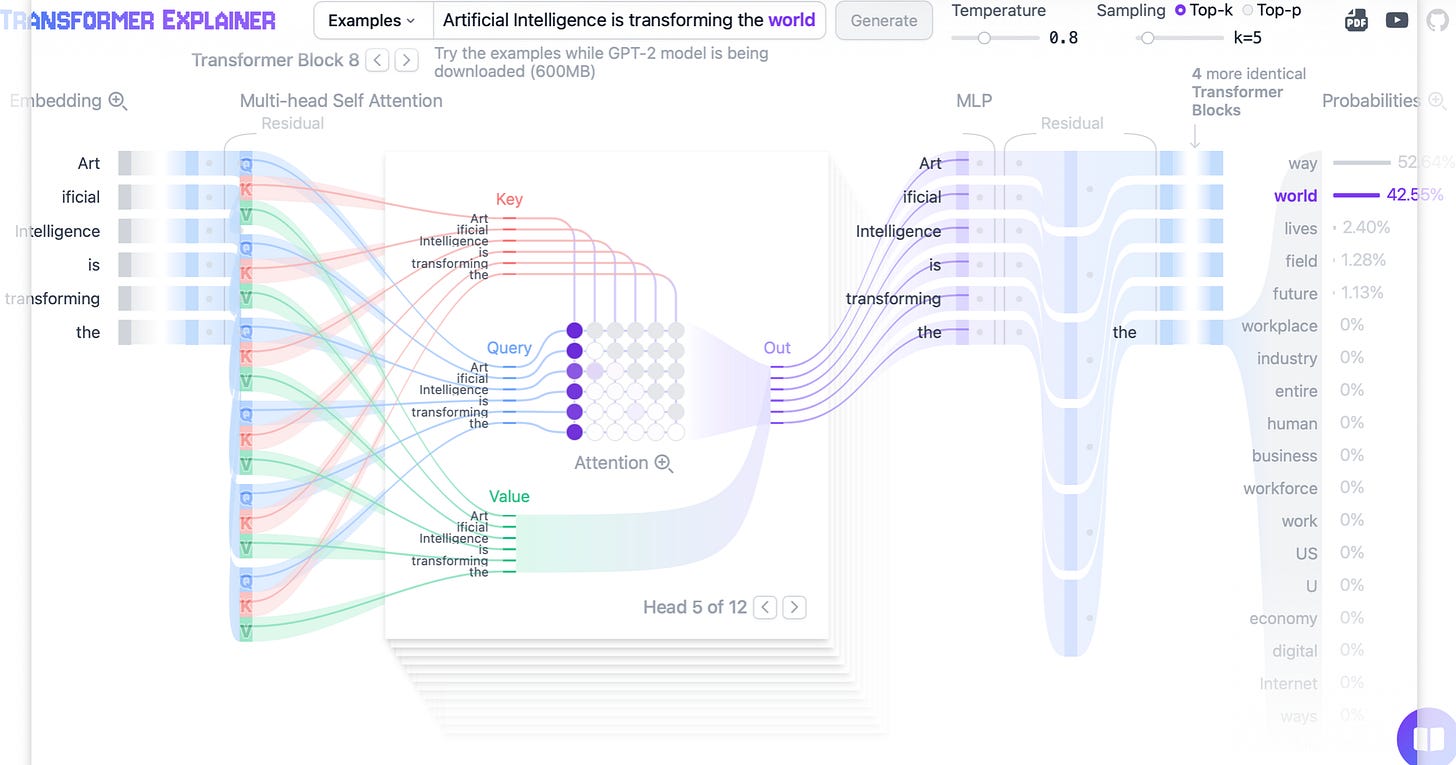

Transformer models, as best I understand them, are machines for weighting relations among symbolic units and generating statistically likely continuations. LLMs tokenize language, embed those tokens as vectors in high-dimensional mathematical spaces, and compute outputs by statistically reweaving relations learned from vast collections of text originally composed by human beings.
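For readers who want the loom image made concrete, here is a deliberately crude sketch. It is not how transformers work: it is a toy bigram model that can only recombine word pairs already present in its training text, sampling each next word in proportion to how often it followed the previous one. The whitespace tokenizer, the function names, and the sample corpus are hypothetical illustrations; real LLMs use learned subword tokenizers, vector embeddings, and attention over long contexts.

```python
import random
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    # Hypothetical whitespace tokenizer; real LLMs use learned subword vocabularies.
    return text.lower().split()


def train_bigrams(tokens: list[str]) -> dict[str, Counter]:
    # Count which token follows which: the "already-spun thread" of the corpus.
    table: dict[str, Counter] = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        table[prev][nxt] += 1
    return table


def continue_text(table: dict[str, Counter], start: str, length: int, seed: int = 0) -> list[str]:
    # Extend `start` by up to `length` tokens, each sampled from observed followers.
    rng = random.Random(seed)
    out = [start]
    for _ in range(length):
        followers = table.get(out[-1])
        if not followers:
            break  # nothing in the corpus ever followed this token
        words, counts = zip(*followers.items())
        # Weighted choice: frequent pairings in the corpus are more likely to recur.
        out.append(rng.choices(words, weights=counts, k=1)[0])
    return out


corpus = "being passes over into nothing and nothing passes over into being"
table = train_bigrams(tokenize(corpus))
print(" ".join(continue_text(table, "being", 8)))
```

Every word pair the sketch emits was already in its corpus; it can rearrange those pairs into new sequences, but it never produces a pairing it was not given.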

Their architecture and capabilities are astonishing. An LLM can generate discourse that bears a surface resemblance to explanation, judgment, irony, humor, poetry, even speculative philosophizing. But none of this amounts to cognition in the sense that Hegel attributes to Reason. It is the production of formally coherent outputs out of previously deposited materials. It is a woven product, the product of a loom, a textile, or a text.

Because we too sometimes engage reality by way of our machine-like faculty of Understanding, Verstand, we are especially vulnerable to this conflation. I would even suggest that one side effect of overreliance on LLM-generated text is the degradation of our own capacity for Reason through the reinforcement of more mechanical modes of Understanding. We learn to think like machines, and so it becomes no surprise that we are more easily convinced that our machines can think.

Human cognition leaves symbolic products behind like a snake shedding its skin. LLMs are trained on those products. They encode the regularities of their relations and can output new products with similar statistical arrangements. But the complete library of philosophy is not philosophizing. A machine model of language can store, rearrange, and transmit meaningful information, but it cannot create information, nor can it understand meaning. For the full argument at book length, I would point you to Raymond Ruyer’s Cybernetics and the Origin of Information.

Large language models can relay and recombine the fossilized and numerically tokenized traces of meaning. They take already expressed meanings and reweave them. They can do this with astonishing range and even finesse. But however astonishing this relay of information may be, it should not be mistaken for the creation of meaning.

Communication is never just the transmission of a pattern. It involves expressive and interpretive participation in meaning. Listening or reading is just as much a creative act as speaking or writing.

Machines, certainly LLMs, can assist us in our creative processes, but they cannot themselves create. The current generation of LLM tools should therefore be understood not as an independently emerging superintelligence, but as the latest externalization and augmentation of human intelligence, one more chapter in our long coevolutionary history with technology.

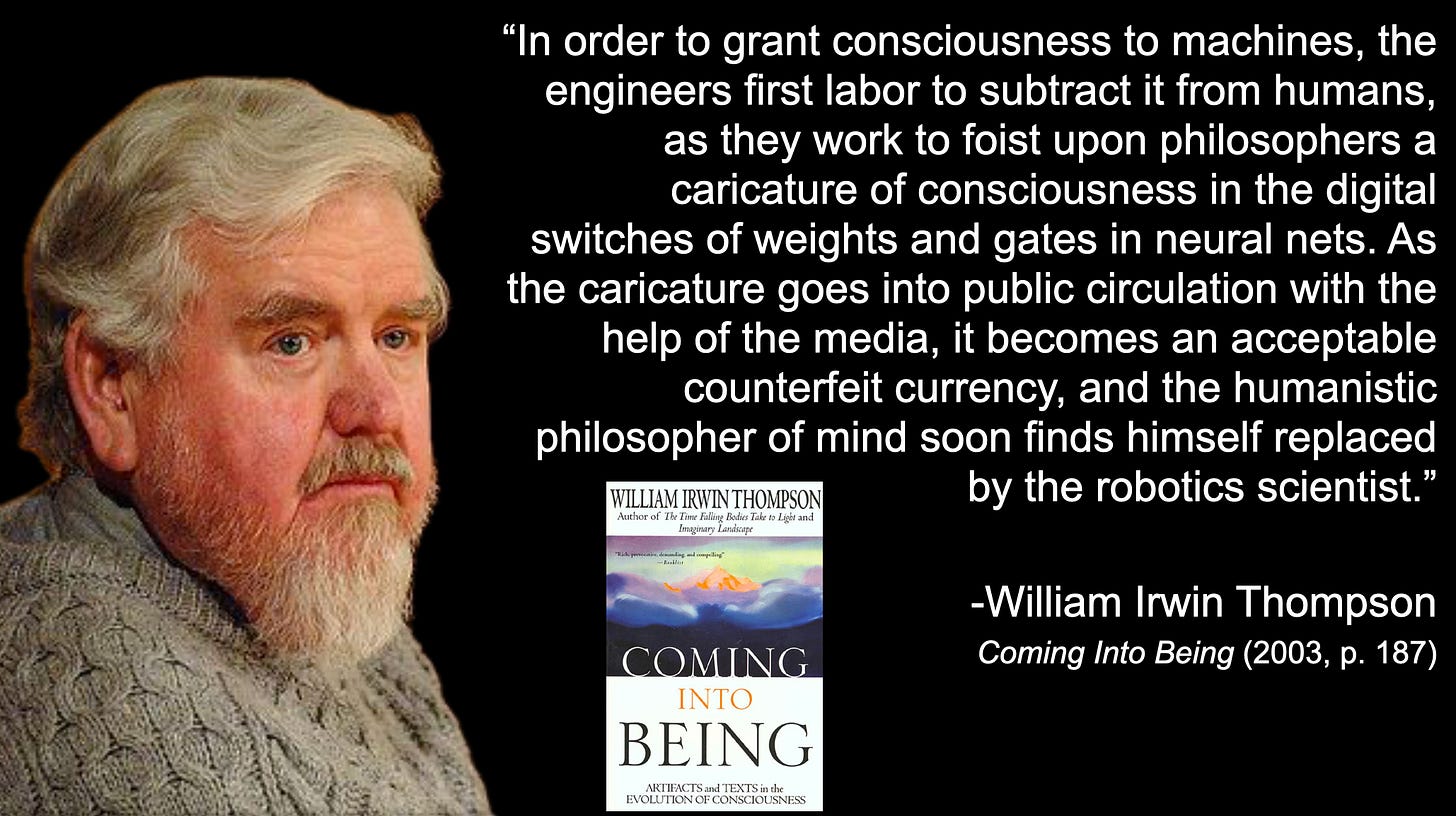

The only way, then, to give subjectivity to machines would first be to take it away from human beings. William Irwin Thompson puts this starkly in his book Coming into Being (2002). He writes:

The point is that human powers of language, memory, and imagination are extracted and uploaded into machine-learning algorithms and then sold back to us in estranged form. We confront our own externalized intelligence as though it belonged to an autonomous agency. We encounter real thought, but in alienated form. The husk of spirit is mistaken for living spirit.

But the loom is not the Logos.

Consciousness is not an inner screen displaying representations. It is not digital software running on neural hardware. It is an embodied, historically haunted, ethically burdened, self-creating, and world-inhabiting activity.

So having said all that, what then is our task as conscious human beings in this cybernetic age? To reiterate, my argument is not that we should refuse technology, but that we should avoid idolatry. We cannot return to some fantastical pretechnical innocence. But what we can do is cultivate deeper discernment by attending to the difference between simulation and participation, the difference between weaving finished threads and the genuinely generative activity of our own thinking.

Our machines will undoubtedly continue to become increasingly convincing mirrors of the outer rind of our minds. But a mirror is not aware of what it reflects. Contrary to the transhumanist fantasies pushed by corporate titans of tech, our task is not to reverse-engineer ourselves into obsolescence. Humanity is not the biological bootloader for a superior species of silicon superintelligence.

The question we face is not whether machines can be made to imitate mind more convincingly. They can, and they will. The question is whether, under the spell of this imitation, we will lose our minds by forgetting how to think.

If there is to be a new dawn for consciousness, I do not believe it will be a function of greater computational power. It will require a renewed love of wisdom and an openness to the more-than-human world, not as a heap of dead objects to be manipulated by our technologies, but as a community of fellow subjects. Then perhaps our technological extensions may further enhance life, and the life of thought, rather than amputating it, serving a more conscious participation in the creative advance of the universe.

Thank you for your attention, and I welcome your questions and comments.

Q&A

I think it is already a useful step when anyone in the tech world is willing to question the premise that a complicated enough machine, whether a language model or any machine-learning algorithm given enough training data or with its nodes and weights arranged in just the right way, would somehow spontaneously generate consciousness. I am only trying to amplify those points of view and bring a bit more respectability to the position that one can be, as I tried to articulate, open to the coevolutionary dynamics between human beings and technology without thereby affirming the possibility of autonomous machine consciousness.

I do not think AI is something we should respond to by breaking into server farms and destroying machines. I think there is a certain inevitability to the development of technology, though I also think there are changes that could be made, perhaps naturally, to our political economy. Much of the danger of AI, in my view, comes not from the possibility that it will suddenly become superintelligent and turn us all into paperclips, but from a further intensification of the same sort of capitalist extraction and externalization that has driven the modern economy for centuries. That is the danger: that AI amplifies the extractive power of capital.

My hope is that we can begin to think of these technologies more as a commons, because with large language models it is quite obvious that there has been a kind of cognitive enclosure analogous to the land enclosures that allowed capitalism to get up and running. These technologies work because they have harvested the collective intelligence of human beings who shared their thinking capacities in the production of the text used to train them. So this is a cognitive commons. My hope would be that as these technologies continue to roll out, the privatization of knowledge becomes impossible.

In terms of physicalism, no, I do not accept the Copenhagen interpretation of quantum mechanics. I think decoherence happens whether a human observer is there to look or not. I am more partial to, say, the transactional interpretation of quantum physics. I do not know that Ruth Kastner would put it exactly this way, but from a Whiteheadian point of view, if we attribute experience to energetic processes from top to bottom across the universe, then the universe is observing itself. It does not need to wait for a human being to look. The moon is there because moondust feels what it is like to be the moon, to put it colloquially.

Certainly, to the extent that there are electrons running through the transistors and gates in these microprocessors, if our ontology suggests that every actual occasion has both a physical pole and a mental pole, then there is some extent to which those electrons are tapping into fields of possibility. But they are heavily constrained by the architecture of the silicon wafers through which they run, and also by the weights and the training regime that shape those weights.

I do not think there is anything like the living nexus that would allow what Whitehead would call a dominant monad to emerge, where there is a chance for a higher-level unity of experience to arise and then to downwardly affect the behavior of the system. Microprocessors, it seems to me, are largely stuck in the actual, rearranging already formed material, weaving thread that has already been spun.

As a panexperientialist, I cannot deny that there is some experiential texture even in electrons and silicon atoms and so on. The question is whether something like a living personality, or, in Whitehead’s technical terms, the nonsocial nexus we identify with our own stream of consciousness, could arise in a system that is not biological, not engaged in ongoing metabolic activity, not marked by the kind of precariousness required to autopoietically maintain itself at the molecular level moment by moment, and not shaped by billions of years of evolutionary history refining the channeling of feeling into a dominant monad, a horizon of experience arising through the collective effort of trillions of cells. That takes an immense evolutionary history to refine. These machines are brand new. We designed them yesterday. They lack that evolutionary history and context. So it seems very unlikely to me that they could engage in the kind of free exploration of possibility that the human imagination can, or that even a single cell can, given the level of complexity at play there.

There are important distinctions among the various research programs I discussed. My problem with the free-energy principle, active inference, predictive processing, and so on is that they are based on the Bayesian brain idea, which treats the brain as a kind of calculator of statistical probabilities. I am not saying it is not an interesting model. I just do not think the brain is literally calculating probabilities and rearranging priors. That is a way of speaking, one that could help us design machines that mimic lifelike behaviors, but it does not seem to me to capture how the brain, the body, or our physiology actually work.

There is a great deal of hand-waving about how an FEP-style account, the minimization of surprisal, and all the rest are somehow associated with consciousness. But to me it still seems like a mechanistic explanation. That whole process of adjusting priors in light of the accuracy of predictions about sensory input could occur without any experience whatsoever, simply as a chemical reaction or thermodynamic process. There would be no need for consciousness if that were all that was going on. And yet here we are. So again, it is an interesting model, and I study that research closely, but I do not think human cognition can be understood as a form of calculation. That would reduce Reason to Understanding, in the sense I borrowed from Hegel and the German Idealists.

I would advocate for a recovery of the Romantic tradition, not as some naive or childish withdrawal from the advance of scientific understanding, but as a reinterpretation of scientific knowledge, which is more or less Whitehead’s project: to recover the Romantics’ primary metaphor of the organism.

The Enlightenment is a very complex phenomenon, and I do not want to dismiss it as though it simply foisted the machine metaphor upon us. That is partly true, but the Enlightenment also valued individual freedom and autonomy and resisted the idea that the human being could simply be understood by analogy to machines. Even if Enlightenment philosophy largely mechanized nature, it did so partly in order to afford the expansion of human freedom through the application of scientific knowledge to a mechanistic nature. The idea was that this would further liberate human beings.

There is no doubt that technology has brought much good and has eased a great deal of suffering, but it has also created new forms of suffering. That is the issue. So I think the Romantic worldview, the organic metaphor applied to our own identity as human beings and to our self-world relationship, points in the right direction. But it must be balanced with the Enlightenment’s concern for autonomy and freedom, because there are dangers in Romanticism too. There are dangers in the organic conception of society when it slides toward totalitarianism and ceases to respect the freedom of individuals.

Still, we need to recover a sense of our participation in a larger organic process, and to recognize that the cosmos is in some sense deadened by our mode of attunement, or lack thereof. If we can open our eyes and ears and hearts again to the world around us, we may discover that nothing we have learned through scientific investigation in any way refutes the idea of a living universe. All of physics can remain intact and be taken with full seriousness, while we also come to recognize that it is describing a world that is fundamentally alive, describing it in refined, mathematical, precise ways. When we bring our full suite of senses and our imagination back into our perception of the world, it seems to me quite obvious that it is alive.

Whitehead, of course, is an organic realist, not an absolute idealist. I brought Hegel into the presentation mostly because I am teaching him this semester, and while I have not been converted to absolute idealism, every time I spend time with Hegel I appreciate his brilliance more. Whitehead studied the British Hegelian F. H. Bradley and was friends with McTaggart. Whitehead says he tried reading Hegel a few times and did not get much out of it, but he was certainly friends with and influenced by many Hegelians.

In Process and Reality, Whitehead describes the process of concrescence as akin to the development of a Hegelian idea. But he also clarifies that whereas in Hegel reality is composed out of a hierarchy of concepts, in Whitehead’s universe it is a hierarchy of feeling. Whitehead is ultimately a kind of radical empiricist, coming out of the Jamesian tradition. William James, of course, was very critical of Hegel, except when he was on nitrous oxide, at which point he reported that he finally understood Hegel because he had a kind of mind-at-large experience.

Even though Whitehead is a realist, he says that, in the final interpretation, the philosophy of organism approximates the basic view of absolute idealism. What I think he means is that the universe, in all its plurality and particularity, in all its multifariousness, to use one of Whitehead’s favorite words, still exists within a larger divine process.

Whitehead’s God, like every other actual entity, is dipolar. There is the primordial, mental pole and the consequent, physical pole of God. All of our experience exists within that larger dipolar process, which is conscious at least in its physical pole insofar as it relates to each of us in our moment-to-moment experience. So God is not separate from us. God is not a mind-at-large from which we are dissociated. We may not notice most of the time that we are participating in the divine life, but our consciousness contributes to that cosmic consciousness.

At the end of the day, I think what Hegel means by the Absolute and what Whitehead means by God are actually very close, perhaps even the same (non)thing.

As for my own use of large language models, because I do so many podcasts, I primarily use them to clean up transcripts. In terms of workflow, that is genuinely useful. I often prefer to read transcripts rather than listen to recordings, unless I am driving or something. So that is one use case.

I am a bit worried about their use in education, especially with younger children, because until you get to around eleven, twelve, or thirteen, much of what you are learning is not content alone. You are modeling the character of your teachers. It is about learning how to be a person. You need a human being to exemplify that so that you can absorb the virtues of being a mature, responsible adult by interacting with mature, responsible adults as teachers.

If you sit children in front of screens, even if the AI is tailored to that child’s learning style, you lose the human dimension of character formation. So I am concerned about its use in early childhood education.

We are still figuring out the healthy ways to use these technologies. I think here of Plato in the Phaedrus, where Socrates gets upset about the use of the alphabet and writing, saying that the kids are going to ruin their memories by relying on this new technology that externalizes memory and thereby atrophies endogenous memory. And yet where would we be without literacy? Many would argue that print-based literacy was precisely the technology that supported the widespread adoption of democracy in earlier periods. Literacy rates are falling, and so is the health of our democracies.

So how LLMs will change human culture, cognition, and consciousness remains to be seen. My hope is that we come into a more responsible relationship with these technologies, and that the eventual outcome will be analogous to what happened with the alphabet. There is already some irony in Plato, one of the most poetic and insightful writers of his time, railing against the very technology he was using so effectively.

What do you think?